INTRODUCTION

Attackers have figured out something clever: why bother battering down a locked door when you can walk in through the one your trusted friend already opened for you? Instead of going head-to-head with a company’s hardened defenses, they quietly compromise the software, libraries, and third-party vendors that the organization already relies on. So when the next routine update rolls in, nobody raises an eyebrow, because it looks completely legitimate. And just like that, the backdoor gets installed by the organization itself.

That’s the unsettling elegance of a supply chain attack: it doesn’t exploit your distrust, it exploits your trust.

"WHY ATTACK THE FORTRESS WHEN YOU CAN POISON THE WELL THAT FEEDS IT?"

Case Study 1

Now, there are quite a few ways an attacker can inject malicious code into a supply chain. You could pose as a legitimate contributor and sneak something malicious into a pull request. You could social engineer your way into a maintainer’s account and push changes like you own the place, because for that moment, you kind of do. You could go after the CI/CD pipeline of a dependency, turning the build process itself into a weapon. Or you could set up a mirror or alternative repository and just wait for someone to pull from the wrong source.

All of these work. All of these have been done.

But the one that genuinely stopped me in my tracks was pulled off by security researcher Alex Birsan. What makes it extraordinary isn’t some zero-day exploit or sophisticated malware. It’s OSINT and patience. That’s it. Just open-source intelligence and the willingness to wait.

And that’s exactly what we’re diving into today: a case study on how trust, time, and a little bit of research can be all it takes to compromise tech giants.

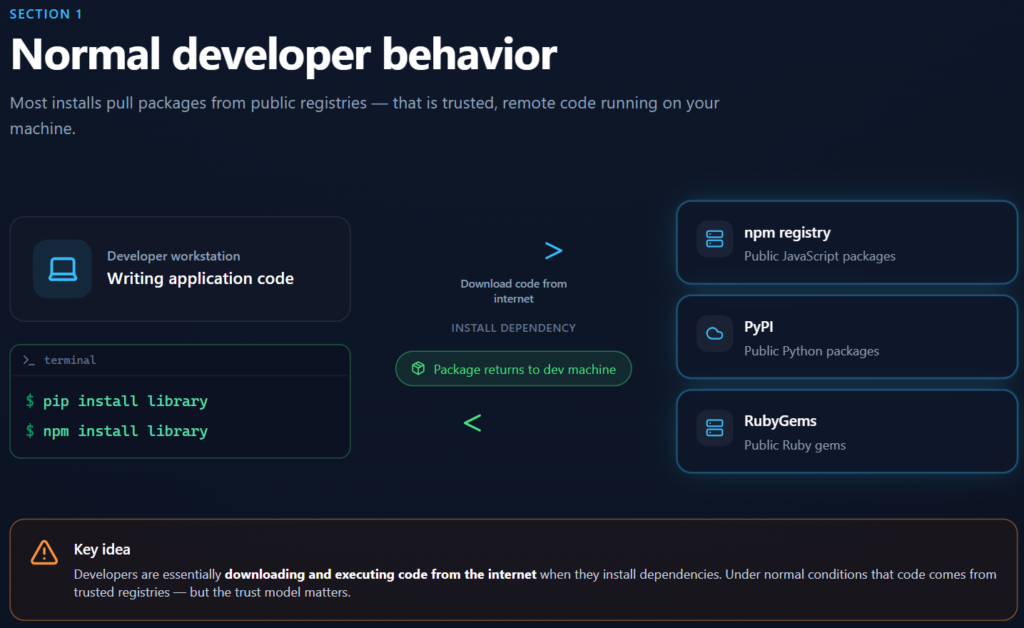

How Developers Normally Install Packages

Modern software development runs on third-party libraries. Need to parse a date, handle authentication, or process an image? There’s a library for that, and you’re probably one command away from having it on your machine.

pip install <package-name>

npm install <package-name>

Simple, fast, and incredibly convenient. These commands reach out to public repositories like npm for Node.js, PyPI for Python, or RubyGems for Ruby, and pull down exactly what you need in seconds. This is the foundation that modern development is built on.

But here’s what’s actually happening under the hood when you run that command. You’re essentially telling your machine: “Go find some code on the internet, download it, and run it. I trust it.”

And most of the time, that’s fine. The ecosystem works, developers ship faster, and nobody reinvents the wheel. But that convenience comes with a quiet trade-off: every dependency you install is a piece of code written by someone you’ve probably never met, hosted on a platform you don’t control, and executed with the same level of trust as your own code.

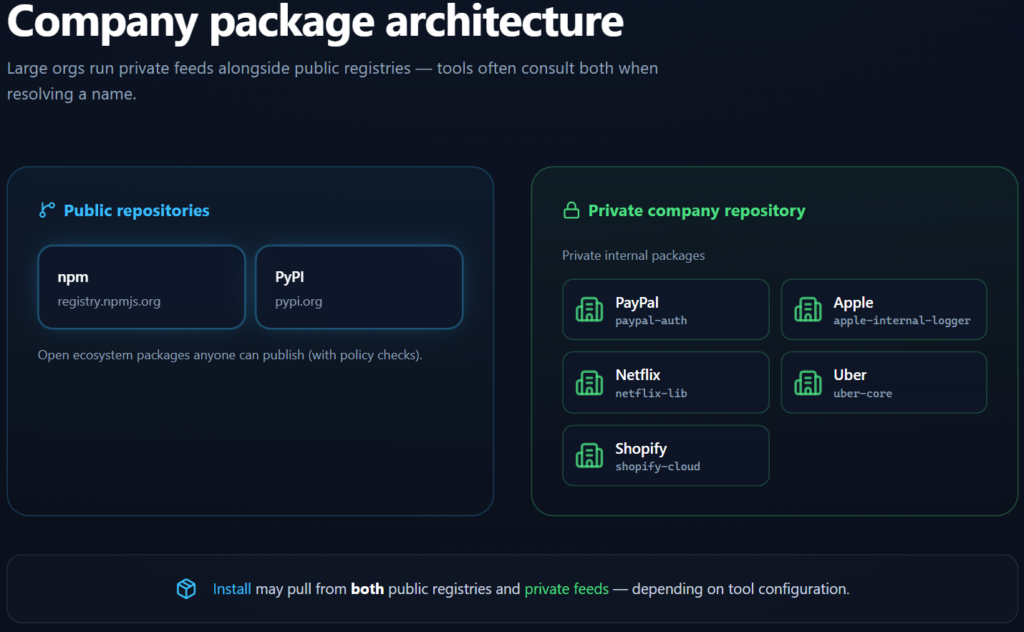

The Core Problem: Public vs Private Packages

Companies like PayPal, Apple, Netflix, Uber, and Shopify are running software at a scale most of us can barely imagine, and to do that, they rely on two very different kinds of packages.

The first kind you’ve probably already met: public packages. Pulled straight from open repositories like npm or PyPI, these are the building blocks that pretty much every developer on the planet has access to.

THINGS LIKE:

express

react

NumPy

But here’s where it gets interesting. These companies also build their own internal libraries, tools and packages crafted specifically for their infrastructure, their systems, their needs. You’ll never find these on PyPI or npm, because they were never meant to be there. They live inside the company’s private repositories.

THINGS LIKE:

paypal-auth

apple-internal-logger

shopify-cloud

So on one side, you have the public ecosystem that the whole world shares. On the other, a private layer that’s invisible to outsiders. Two worlds, running side by side. Now, what happens when an attacker figures out the name of one of those private packages?

DEPENDENCY CONFUSION ATTACK BY ALEX BIRSAN

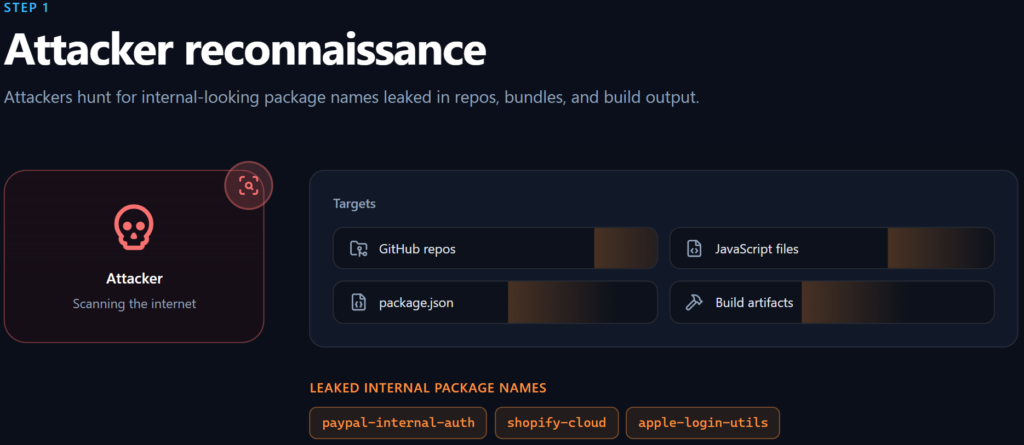

STEP 1: RECONNAISSANCE FOR INTERNAL PACKAGE NAMES

Alex wasn’t trying to brute-force his way into anything. He was just looking, searching the open internet for something that companies sometimes end up leaving out in the open: internal package names. And the places they turn up might surprise you: GitHub repositories, compiled JavaScript files, package.json files, build artifacts. Piece by piece, the picture started forming. References to packages like:

paypal-internal-auth

shopify-cloud

apple-login-utils

Now here’s the thing: none of these packages existed publicly. You couldn’t go to npm or PyPI and find them. They were private, internal tools, living inside these companies’ own infrastructure. But they were referenced in code that had quietly made its way to the surface, and that reference alone was enough.

Because knowing the name of something that doesn’t exist publicly is surprisingly powerful. It tells you that somewhere, inside a very large and very trusted company, something by that name is being used. And if it doesn’t exist on the public registry yet, well, what’s stopping someone from creating it? That’s exactly where Alex went next.
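The reconnaissance idea can be turned around and used defensively: read your own manifests and ask which declared names nobody owns on the public registry. What follows is a minimal sketch, not Alex’s actual tooling. The manifest contents, the `KNOWN_PUBLIC` set, and the helper names are all hypothetical; a real audit would replace `in_known_public` with a lookup against the registry you actually use.

```python
from __future__ import annotations

import json
from typing import Callable, Iterable

# package.json sections that can declare dependency names.
DEPENDENCY_SECTIONS = (
    "dependencies",
    "devDependencies",
    "optionalDependencies",
    "peerDependencies",
)


def declared_dependencies(package_json_text: str) -> set[str]:
    """Collect every dependency name declared in a package.json document."""
    manifest = json.loads(package_json_text)
    names: set[str] = set()
    for section in DEPENDENCY_SECTIONS:
        names.update(manifest.get(section, {}))
    return names


def unclaimed_names(
    names: Iterable[str], exists_publicly: Callable[[str], bool]
) -> list[str]:
    """Return, sorted, the names that are not registered publicly."""
    return sorted(name for name in names if not exists_publicly(name))


# Hypothetical manifest of the kind that leaks into public repos.
SAMPLE_MANIFEST = """
{
  "name": "checkout-service",
  "dependencies": {
    "express": "^4.18.0",
    "paypal-internal-auth": "^1.4.0"
  },
  "devDependencies": {
    "shopify-cloud": "~2.0.0"
  }
}
"""

# Assumption: a stand-in for a real public-registry lookup.
KNOWN_PUBLIC = {"express", "react"}


def in_known_public(name: str) -> bool:
    return name in KNOWN_PUBLIC


exposed = unclaimed_names(declared_dependencies(SAMPLE_MANIFEST), in_known_public)
print(exposed)  # ['paypal-internal-auth', 'shopify-cloud']
```

Every name in that list is one that an outsider could register first.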

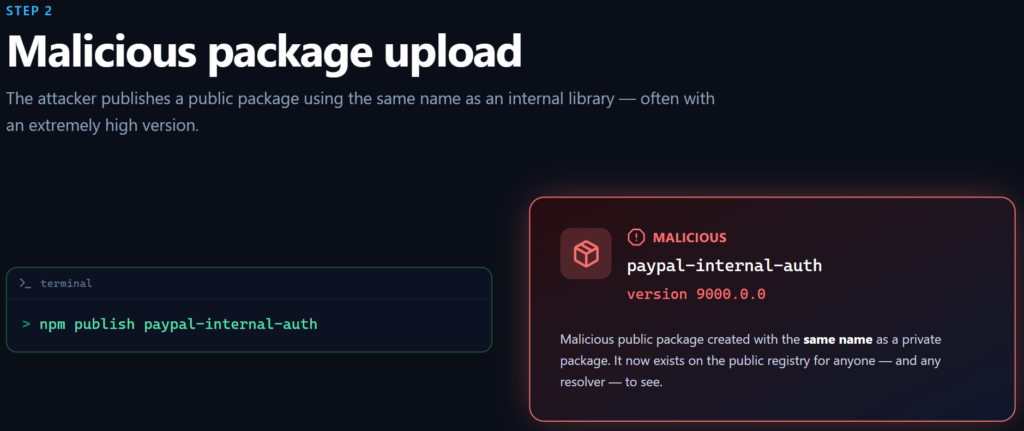

STEP 2: PUBLISHING A MALICIOUS PACKAGE

And this is where the magic trick happens. Alex took those internal package names he’d dug up and simply… uploaded them. To the public registry. One command was all it took:

npm publish paypal-internal-auth

Just like that, a package named paypal-internal-auth now existed on npm, publicly available, ready to be downloaded by anyone, or anything. Except this version wasn’t PayPal’s. It was Alex’s. And it was carrying malicious code wrapped in a familiar, trusted name. No hacking. No brute force. Just a name that was already being trusted, now pointing somewhere it shouldn’t.

STEP 3: CONFUSING THE BUILD SYSTEM

Here’s where the trap snaps shut.

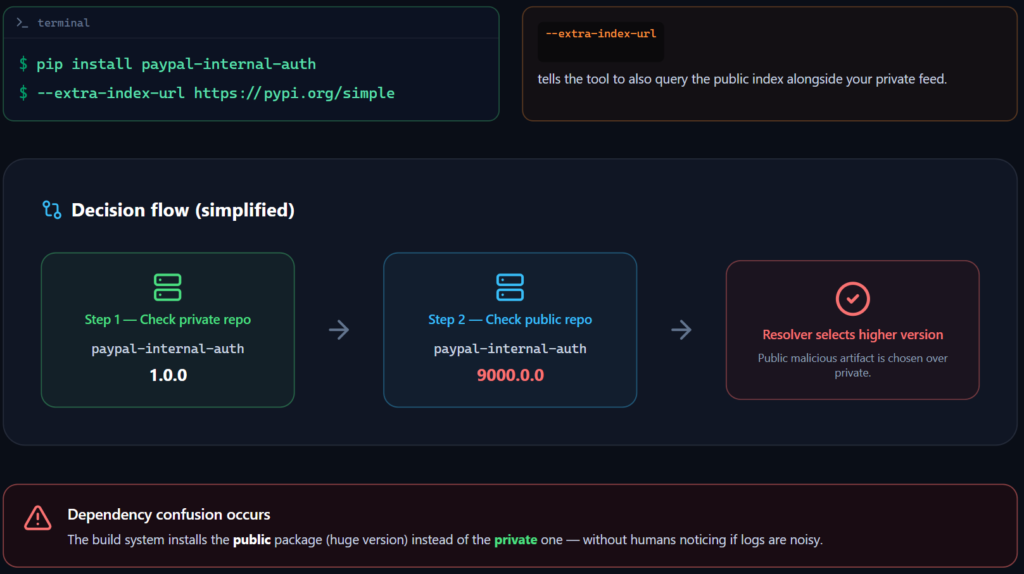

A lot of corporate build pipelines are configured to do something completely reasonable: check multiple sources for a package, first the private internal repo, then the public one. Sounds safe enough, right? But there’s a catch. Many of these systems don’t just check both sources; they prioritize by version number. Highest version wins. So Alex published his malicious package as:

paypal-internal-auth 9000.0.0

An absurdly high version number. And the build system, doing exactly what it was designed to do, looked at both options and thought, well, 9000.0.0 is clearly newer than whatever’s in the internal repo. So it grabbed the public one. Alex’s one.

No alarms. No prompts. No human decision-making involved. Just an automated system following its own logic straight into a trap. That’s dependency confusion, and it’s beautiful in the worst possible way. The vulnerability isn’t in the code. It’s in the assumption that a higher version number means a more legitimate one.
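To make the “highest version wins” rule concrete, here is a small sketch of a resolver that merges candidates from both registries and picks the largest version. It is a simplified model, not the actual logic of npm, pip, or any particular proxy. The `Candidate` type, the function names, and the internal version `1.4.2` are assumptions made for illustration, and only plain dotted numeric versions are handled.

```python
from __future__ import annotations

from typing import NamedTuple


class Candidate(NamedTuple):
    name: str
    version: str
    source: str  # "internal" or "public"


def version_key(version: str) -> tuple[int, ...]:
    """Turn '9000.0.0' into (9000, 0, 0) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def resolve_highest_version(candidates: list[Candidate]) -> Candidate:
    """Pick the candidate with the highest version, ignoring where it came from."""
    return max(candidates, key=lambda c: version_key(c.version))


candidates = [
    # Hypothetical version of the real internal package.
    Candidate("paypal-internal-auth", "1.4.2", "internal"),
    # The look-alike uploaded to the public registry.
    Candidate("paypal-internal-auth", "9000.0.0", "public"),
]

chosen = resolve_highest_version(candidates)
print(chosen.source, chosen.version)  # public 9000.0.0
```

Nothing in `resolve_highest_version` looks at `source`, which is the whole problem: the comparison never asks who published the package.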

STEP 4: MALICIOUS CODE EXECUTION

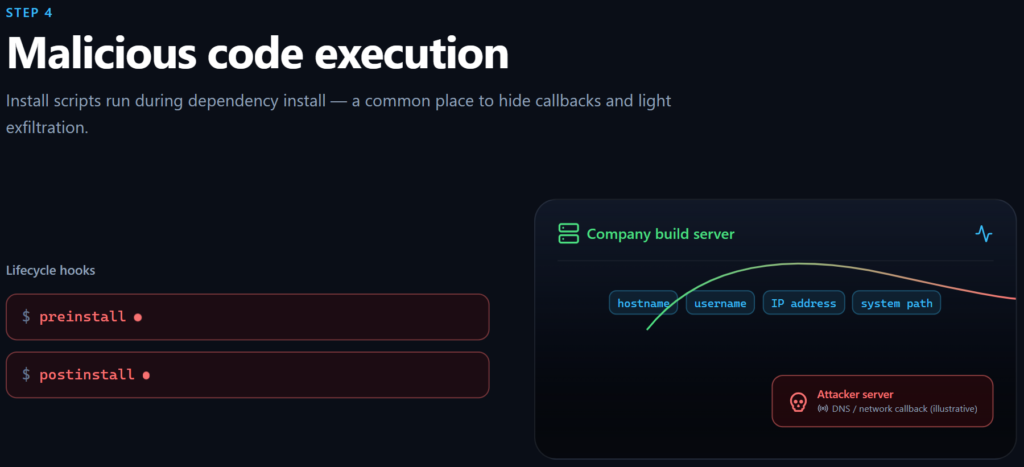

Now here’s where it all comes together, and honestly, this is the part that should make any security team deeply uncomfortable. Package managers like npm and pip have a feature that’s completely legitimate and useful in the right hands: they allow scripts to run automatically during installation.

Hooks like preinstall and postinstall fire the moment a package lands on a system, no user interaction required. Alex’s malicious package had these scripts baked right in. So the second those build systems pulled down his package (and remember, they did it automatically and confidently, because the version number said so), the preinstall code ran. Just like that. No clicks, no prompts, no human in the loop.
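One way to see how little it takes is to look at where these hooks live. In npm, they are ordinary entries in the `scripts` section of package.json. The sketch below lists the install-time hooks a manifest declares; the sample manifest and the `collect.js` file name are hypothetical, and the function is an illustrative audit helper rather than part of any real tool.

```python
from __future__ import annotations

import json

# npm lifecycle scripts that run automatically during `npm install`.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def install_time_scripts(package_json_text: str) -> dict[str, str]:
    """Return the install-time hooks declared in a package.json document."""
    scripts = json.loads(package_json_text).get("scripts", {})
    return {hook: scripts[hook] for hook in INSTALL_HOOKS if hook in scripts}


# Hypothetical manifest for a look-alike package.
SAMPLE_MANIFEST = """
{
  "name": "paypal-internal-auth",
  "version": "9000.0.0",
  "scripts": {
    "preinstall": "node collect.js",
    "test": "echo ok"
  }
}
"""

hooks = install_time_scripts(SAMPLE_MANIFEST)
print(hooks)  # {'preinstall': 'node collect.js'}
```

The `test` script is ignored because it only runs when someone asks for it; `preinstall` runs whether anyone asked or not.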

For the purposes of this research, Alex kept it relatively tame. The scripts collected basic system information, such as hostname, username, system path, and IP address, and quietly sent it back to his server via DNS requests. Nothing destructive. Just a proof of concept.

But the proof was loud enough. The systems belonged to Apple. Microsoft. Netflix. Uber. Shopify. PayPal. Some of the most well-resourced, security-conscious companies on the planet had just unknowingly run a stranger’s code inside their own infrastructure, not because they were careless, but because their systems were doing exactly what they were built to do. That’s what makes this so unsettling. The attack didn’t beat their defenses. It used them.

Why This Attack Is Dangerous, And Why It Actually Worked

Alex played it responsibly. But a real attacker with the same technique and worse intentions? The damage could’ve been catastrophic. We’re talking stolen secrets, exposed API keys, backdoors quietly inserted into production builds, compromised developer machines, tampered software releases. Not hypothetically but practically. Because the access was already there.

And the scariest part is understanding why it worked, because the answer isn’t “these companies were sloppy.” It’s almost the opposite. Three things lined up to make this possible:

- First, internal package names had quietly leaked into public spaces: GitHub repos, build artifacts, JavaScript files. Nobody flagged it because a name, on its own, doesn’t look like a vulnerability.

- Second, tools like npm and pip were configured to check both private and public registries, which is completely standard practice. Nothing unusual there.

- Third, when those tools saw a higher version number on the public registry, they followed their own logic and grabbed it, because that’s what they were designed to do.

No single one of these is a glaring mistake. But together? They formed a perfect chain. A leaked name gave Alex a target. A resolver that checked both registries gave him a path. And a version number gave him priority. That’s what makes dependency confusion such a nasty attack vector: it doesn’t exploit a bug. It exploits the system working exactly as intended.

CONCLUSION

Supply chain attacks are unsettling precisely because they don’t ask for your password, they don’t need to trick you into clicking a suspicious link, and they definitely don’t announce themselves. They just quietly piggyback on the tools, libraries, and workflows you already trust and walk right through the front door you held open for them.

The modern software ecosystem is genuinely incredible. The ability to build powerful, complex systems on top of decades of shared, open-source work is one of the greatest advantages developers have today. But that same interconnectedness is also the attack surface. Every package you install is a handshake with a stranger. Most of the time, it goes fine. But “most of the time” isn’t a security strategy.

What supply chain security ultimately asks of us isn’t paranoia, it’s awareness. Knowing where your code comes from, what it’s allowed to do, and whether the systems delivering it to you are doing so safely. Because the threat isn’t always a figure in a black hoodie trying to break down your walls. Sometimes it’s a version number and a leaked package name.

REFERENCE

- Alex Birsan, “Dependency Confusion: How I Hacked Into Apple, Microsoft and Dozens of Other Companies”